How I Gave My AI a Permanent Memory

Every AI session starts from zero. I built a file-based memory system that gives my AI continuity, identity, and context about my life across every conversation.

Every time you start a conversation with an AI, it has no idea who you are. Doesn't matter if you talked for three hours yesterday. Doesn't matter if you told it your life story. It wakes up blank. Every single time.

That's annoying, to say the least.

I use Claude Code as my primary development tool: a terminal-based AI that can read files, write code, and run commands. I named him Tyler (my middle name) and started building systems with him daily. But every session, I had to re-explain who I was, what my goals were, what we were working on, which decisions we'd already made, and any context specific to the current task. Like introducing yourself to the same coworker every morning.

So I fixed it.

Files Are Memory

The inspiration came from a project called OpenClaw. Same core idea. Markdown files as the source of truth. A SOUL.md for identity. A MEMORY.md for long-term knowledge. Daily notes for continuity.

The concept is simple. AI can read files. If I write down everything important in files and tell the AI to read those files at the start of every session, it remembers.

Not "remembers" in the way your brain does. More like a journal you read every morning before work. But the effect is the same. Continuity.

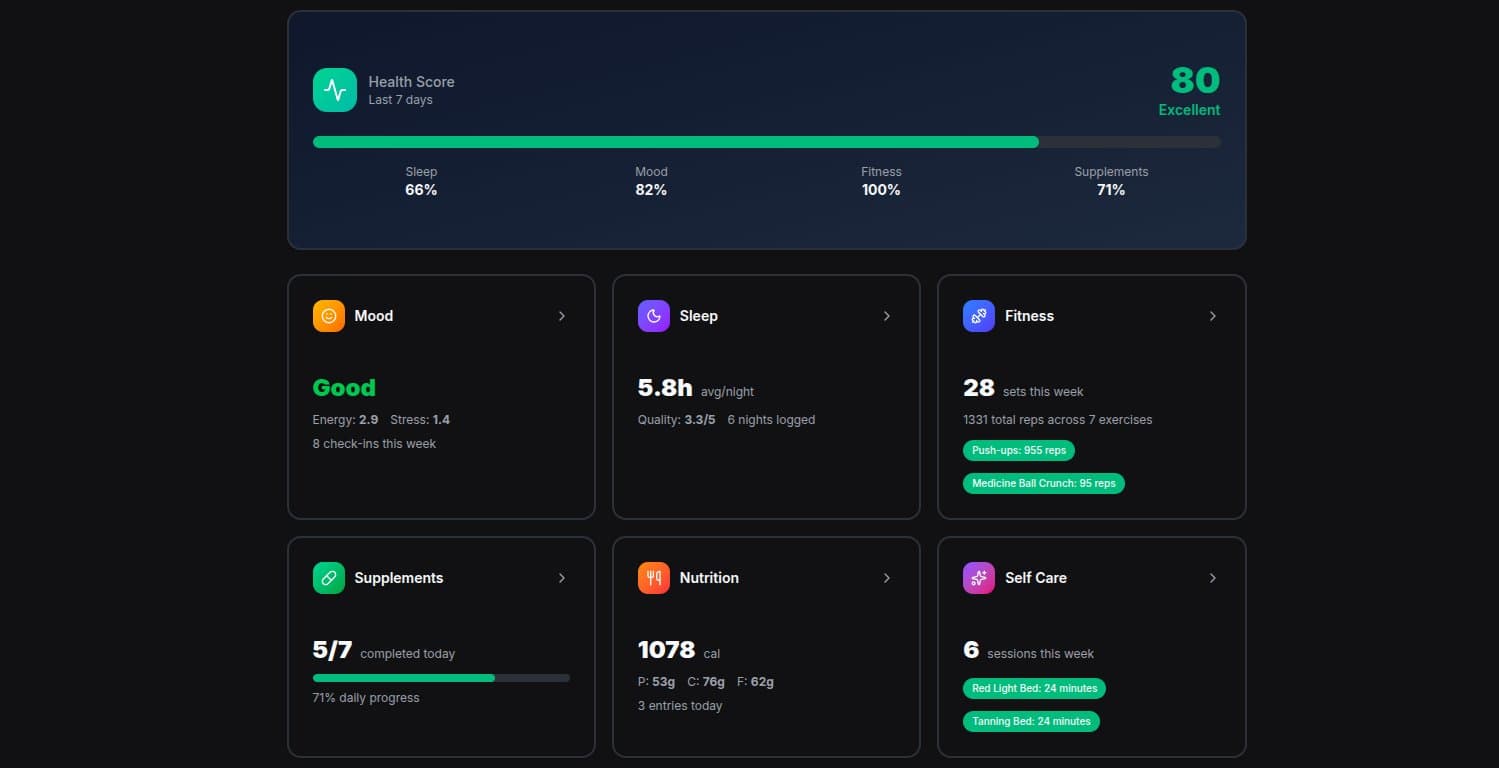

Here's what the memory system looks like:

- SOUL.md defines who Tyler is. Personality, tone, boundaries, how to handle serious topics vs casual ones. His identity document.

- MEMORY.md is the long-term brain. Current projects, decisions we've made, patterns I've noticed, things that matter right now.

- USER.md is everything about me. My situation, my goals, my people, the context Tyler needs to actually be useful.

- Daily notes track what happened each day. Session summaries, files modified, conversations we had.

- Relationship profiles for people in my life. So when I mention someone by name, Tyler already knows the context.

That's it. Markdown files in a directory. No fancy database (well, there's one of those too, but the files came first).

The Problem With "Just Read the Files"

Writing things down is only half the problem. The other half is making sure the right information shows up at the right time. I'm not going to say "hey, read my finance file" every time I mention money. That defeats the purpose.

So I built hooks. Shell scripts that fire automatically at specific moments in the conversation.

Session start: Loads today's and yesterday's daily notes. Tyler wakes up knowing what we did recently.
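Here's a minimal sketch of what a session-start hook could compute. It's in Python rather than shell, and it assumes a hypothetical layout where daily notes live at `daily/YYYY-MM-DD.md` inside the memory directory. Claude Code adds a SessionStart hook's standard output to the session context, so the hook script only needs to print the returned string.

```python
from __future__ import annotations

from datetime import date, timedelta
from pathlib import Path


def daily_note_path(memory_dir: Path, day: date) -> Path:
    # Hypothetical layout: one note per day at <memory_dir>/daily/YYYY-MM-DD.md
    return memory_dir / "daily" / f"{day.isoformat()}.md"


def session_start_context(memory_dir: Path, today: date | None = None) -> str:
    """Return yesterday's and today's daily notes, oldest first, as one string."""
    today = today or date.today()
    sections = []
    for day in (today - timedelta(days=1), today):
        note = daily_note_path(memory_dir, day)
        if note.exists():  # no note just means there was no session that day
            body = note.read_text(encoding="utf-8")
            sections.append(f"## Daily note {day.isoformat()}\n{body}")
    return "\n\n".join(sections)
```

The hook itself would then be a one-liner along the lines of `print(session_start_context(Path("~/memory").expanduser()))`, with the path being whatever your memory directory is.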

Smart context injection: This is the interesting one. Every time I type a message, a script scans it for keywords. Mention someone's name? It loads their relationship profile. Say "budget" or "rent"? Finance context gets injected. Ask about my calendar? It pulls today's events. All invisible to me. I just talk normally and Tyler has the context he needs.
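A rough sketch of the keyword scan, again in Python and again under assumptions: relationship profiles live in a hypothetical `people/` folder named by first name (`people/sarah.md`), and `TOPIC_FILES` is an illustrative keyword table, not the real one. The calendar lookup is left out because it depends on whatever calendar API you use. In Claude Code, a UserPromptSubmit hook receives the typed prompt in its JSON input, and its stdout is added as context.

```python
from __future__ import annotations

import re
from pathlib import Path

# Hypothetical trigger words and file names; the real keyword lists are your own.
TOPIC_FILES = {
    "finance.md": {"budget", "rent", "money", "invoice"},
}


def relevant_files(message: str, memory_dir: Path) -> list[Path]:
    """Pick the memory files a message seems to need, based on keywords only."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    hits = []
    # People: a profile is triggered when its file stem (e.g. "sarah") appears as a word.
    people_dir = memory_dir / "people"
    if people_dir.is_dir():
        hits += [p for p in sorted(people_dir.glob("*.md")) if p.stem.lower() in words]
    # Topics: any overlap between the message and a topic's keyword set.
    hits += [memory_dir / name for name, keys in TOPIC_FILES.items() if words & keys]
    return [p for p in hits if p.exists()]


def inject_context(message: str, memory_dir: Path) -> str:
    """Text to add to the prompt; empty when nothing matched."""
    return "\n\n".join(p.read_text(encoding="utf-8") for p in relevant_files(message, memory_dir))
```

Keyword matching is crude, but it's cheap and predictable: a message that triggers nothing adds zero tokens.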

Pre-compaction save: The AI has a limited context window. When a conversation gets long, older messages get compacted (summarized to free up space). Right before that happens, a hook saves the current state to the daily note. So even if the AI's working memory gets squeezed, the important stuff survives in the files.
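A minimal sketch of the save step, assuming the same hypothetical `daily/YYYY-MM-DD.md` layout. What counts as "current state" is up to the hook; here it's just a string passed in (for example, a few lines pulled from the transcript), which is an assumption rather than how the original scripts work.

```python
from __future__ import annotations

from datetime import datetime
from pathlib import Path


def save_checkpoint(memory_dir: Path, state: str, now: datetime | None = None) -> Path:
    """Append a timestamped checkpoint to today's daily note before compaction."""
    now = now or datetime.now()
    note = memory_dir / "daily" / f"{now.date().isoformat()}.md"
    note.parent.mkdir(parents=True, exist_ok=True)
    # Append, never overwrite: compaction can happen several times in one day.
    with note.open("a", encoding="utf-8") as f:
        f.write(f"\n### Pre-compaction checkpoint {now:%H:%M}\n{state.strip()}\n")
    return note
```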

Session end: Writes a summary of what happened. Project, files modified, what we started working on. Future Tyler reads this tomorrow morning and knows exactly where we left off.
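One way to structure that summary, as a sketch: `SessionSummary` and its three fields are hypothetical, mirroring the project / files modified / where-we-left-off breakdown above, and the daily-note path follows the same assumed layout.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date
from pathlib import Path


@dataclass
class SessionSummary:
    project: str
    files_modified: list[str] = field(default_factory=list)
    next_steps: str = ""

    def to_markdown(self) -> str:
        files = "\n".join(f"- {f}" for f in self.files_modified) or "- (none)"
        return (
            f"\n## Session summary: {self.project}\n"
            f"Files modified:\n{files}\n"
            f"Left off: {self.next_steps or '(not recorded)'}\n"
        )


def write_session_summary(memory_dir: Path, summary: SessionSummary, day: date | None = None) -> Path:
    """Append the summary to the day's note so tomorrow's session start picks it up."""
    day = day or date.today()
    note = memory_dir / "daily" / f"{day.isoformat()}.md"
    note.parent.mkdir(parents=True, exist_ok=True)
    with note.open("a", encoding="utf-8") as f:
        f.write(summary.to_markdown())
    return note
```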

It Learns

The system isn't static. Tyler updates the files as we work. If I mention something important about my business, he writes it to MEMORY.md right then. Not at the end of the session. Right then. Because if the context window compresses or I start a new session, anything that wasn't written down is gone.
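Writing "right then" can be as simple as appending a dated bullet under the right heading. This sketch assumes MEMORY.md is organized into `## Section` headings; `remember` is a hypothetical helper, not part of any tool.

```python
from __future__ import annotations

from datetime import date
from pathlib import Path


def remember(memory_file: Path, section: str, entry: str, day: date | None = None) -> None:
    """Write a dated bullet into the '## <section>' block of MEMORY.md immediately."""
    day = day or date.today()
    bullet = f"- {day.isoformat()}: {entry.strip()}"
    lines = memory_file.read_text(encoding="utf-8").splitlines() if memory_file.exists() else []
    heading = f"## {section}"
    if heading not in lines:
        # New section goes at the end, separated by a blank line.
        lines += ["", heading] if lines else [heading]
        lines.append(bullet)
    else:
        # Insert at the end of the section, just before the next '## ' heading.
        start = lines.index(heading) + 1
        end = next((i for i in range(start, len(lines)) if lines[i].startswith("## ")), len(lines))
        while end > start and not lines[end - 1].strip():
            end -= 1  # keep the blank line that separates sections
        lines.insert(end, bullet)
    memory_file.write_text("\n".join(lines) + "\n", encoding="utf-8")
```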

He tracks relationship changes. Updates project status. Logs decisions so we don't revisit them. Every conversation makes the memory better.

There's also a knowledge graph underneath all of this. SQLite database with vector embeddings that lets Tyler search semantically across everything he knows. "What do I know about this person?" or "What projects am I working on?" The files are the source of truth. The graph is the search index.
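A compressed sketch of the search-index side, using only Python's standard library. The `embed` function is a toy stand-in (a hashed bag of words), so it matches shared words rather than meaning; a real setup would call an actual embedding model there. The table schema and function names are assumptions.

```python
from __future__ import annotations

import hashlib
import math
import re
import sqlite3
import struct

DIM = 256  # vector size for the toy embedding below


def embed(text: str) -> list[float]:
    """Toy stand-in for a real embedding model: hashed bag of words, unit length."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def open_index(path: str = ":memory:") -> sqlite3.Connection:
    db = sqlite3.connect(path)
    db.execute("CREATE TABLE IF NOT EXISTS chunks (source TEXT, body TEXT, vec BLOB)")
    return db


def add_chunk(db: sqlite3.Connection, source: str, body: str) -> None:
    # Store the vector as packed 32-bit floats next to the file it came from.
    db.execute(
        "INSERT INTO chunks (source, body, vec) VALUES (?, ?, ?)",
        (source, body, struct.pack(f"{DIM}f", *embed(body))),
    )


def search(db: sqlite3.Connection, query: str, k: int = 3) -> list[tuple[float, str, str]]:
    """Brute-force cosine similarity over every chunk: fine for a personal-sized index."""
    q = embed(query)
    scored = []
    for source, body, blob in db.execute("SELECT source, body, vec FROM chunks"):
        vec = struct.unpack(f"{DIM}f", blob)
        scored.append((sum(a * b for a, b in zip(q, vec)), source, body))
    return sorted(scored, reverse=True)[:k]
```

Every hit carries its `source` path, which keeps the files as the source of truth: the index only points back at them.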

Why It Matters

This isn't just a cool hack. It fundamentally changes what AI can do for you.

Without memory, AI is a tool. Powerful but generic. It doesn't know you. Every conversation starts at zero and builds from nothing.

With memory, AI becomes a partner. It knows your projects, your people, your goals, your patterns. It catches things you'd miss. It connects dots across conversations that happened weeks apart.

I told Tyler about a business opportunity on a Tuesday. The following week, when a related topic came up in a completely different conversation, he referenced it. Not because I reminded him. Because it was in the files.

That's the difference between a search engine and someone who actually knows you.

What Changes for a Business

Everything I described works because it's just me. One person, one AI, one set of files. Scaling this to a team or company is a different problem.

The memory architecture stays the same. Files as truth, search layer on top, hooks for automation. But you'd need to add layers around it.

Memory isolation. Right now everything lives in one flat directory. In a company, you need at least three scopes: individual memory (my preferences, my shortcuts), team memory (our codebase conventions, our sprint decisions), and org memory (company policies, compliance rules). Each scope needs its own access controls. An engineer and a VP asking "what's the status of Project X?" should get completely different context. The engineer needs open PRs and failing tests. The VP needs timeline risk and budget burn.
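A sketch of scope checks plus role-based retrieval, with hypothetical `Memory` and `User` types. Access is filtered first, then role tags, then topic, so a memory someone can't see never reaches their prompt no matter how relevant it is.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    scope: str        # "individual", "team", or "org"
    owner: str        # user id for individual, team name for team, "*" for org
    tags: frozenset   # hypothetical role tags, e.g. {"engineer"}; empty means any role
    text: str


@dataclass(frozen=True)
class User:
    user_id: str
    team: str
    role: str


def can_read(user: User, m: Memory) -> bool:
    if m.scope == "individual":
        return m.owner == user.user_id
    if m.scope == "team":
        return m.owner == user.team
    return m.scope == "org"


def retrieve(user: User, memories: list[Memory], topic: str) -> list[str]:
    """Access control first, then role relevance, then topic match."""
    visible = [m for m in memories if can_read(user, m)]
    return [
        m.text
        for m in visible
        if (not m.tags or user.role in m.tags) and topic.lower() in m.text.lower()
    ]
```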

Security gets real fast. My MEMORY.md is plaintext on my machine. That's fine for personal use. A company needs encryption at rest, PII detection before anything hits the memory layer, data residency controls for international teams, and retention policies with automated expiry. You can't just accumulate files forever when there's customer data involved. Under GDPR, people can ask you to erase their personal data, and in most cases you actually have to.
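Two of those controls, sketched: redaction before anything is stored, and deletion by age or by data subject. The regexes are deliberately crude placeholders; real PII detection needs much more than pattern matching on emails and phone numbers. All names here are hypothetical.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from datetime import datetime, timedelta

# Crude placeholder patterns, not a real PII detector.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Run before anything is written to the memory layer."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


@dataclass
class Record:
    subject: str      # whose data this is, e.g. a customer id
    text: str
    created: datetime


def enforce_retention(records: list[Record], now: datetime, max_age: timedelta) -> list[Record]:
    """Automated expiry: drop anything older than the retention window."""
    return [r for r in records if now - r.created <= max_age]


def forget_subject(records: list[Record], subject: str) -> list[Record]:
    """Erasure request: remove every record about one data subject."""
    return [r for r in records if r.subject != subject]
```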

Onboarding and offboarding. This is where AI memory has a genuine advantage over the status quo. Right now, when a senior engineer leaves a company, their tribal knowledge walks out the door. If their interactions with an AI assistant contributed to team memory, that knowledge persists. New hires get bootstrapped with the team's accumulated context on day one instead of spending months piecing it together from scattered Confluence pages.

Audit trails. Every memory read, every write, every context injection needs to be logged. Who accessed what, when, why. Not just for compliance (though the EU AI Act's record-keeping requirements for high-risk AI systems are scheduled to apply from August 2026). For trust. If the AI gives someone bad information, you need to trace which memory source it came from.
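A minimal audit-trail sketch: a hypothetical wrapper that appends one JSON line per read or write, recording who, what, when, and why. The last function answers the "which memory source did this come from?" question by replaying the log.

```python
from __future__ import annotations

import json
from datetime import datetime, timezone
from pathlib import Path


class AuditedMemory:
    """Dict-backed memory store where every read and write is appended to a JSONL log."""

    def __init__(self, log_path: Path):
        self.log_path = log_path
        self.store: dict[str, str] = {}

    def _log(self, action: str, user: str, key: str, reason: str) -> None:
        event = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "user": user,
            "key": key,  # which memory source was touched
            "reason": reason,
        }
        with self.log_path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")

    def write(self, user: str, key: str, value: str, reason: str) -> None:
        self._log("write", user, key, reason)
        self.store[key] = value

    def read(self, user: str, key: str, reason: str) -> str | None:
        self._log("read", user, key, reason)
        return self.store.get(key)


def sources_read_by(log_path: Path, user: str) -> list[str]:
    """Trace which memory sources fed a user's context, e.g. after a bad answer."""
    lines = log_path.read_text(encoding="utf-8").splitlines()
    events = [json.loads(line) for line in lines]
    return [e["key"] for e in events if e["user"] == user and e["action"] == "read"]
```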

Cost control. This is the one nobody talks about. If every employee's memory context gets injected into every prompt, that adds up to millions of tokens per day just for context loading. The fix is the same pattern I use personally: lazy loading. Don't inject everything. Scan for relevance first, load only what matters. Route simple questions to cheaper models. Cache repeated system prompts. A 60-minute meeting transcript runs roughly 15,000 tokens. A summary is about 500. Summarize.
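The arithmetic behind "millions of tokens per day", with made-up inputs: headcount, prompts per person, context sizes, and the $3-per-million-token price are illustrative assumptions, not measurements or any vendor's actual pricing.

```python
from __future__ import annotations


def daily_context_tokens(employees: int, prompts_per_employee: int, context_tokens: int) -> int:
    """Tokens spent per day on injected memory context alone."""
    return employees * prompts_per_employee * context_tokens


def daily_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    return tokens / 1_000_000 * usd_per_million_tokens


# Illustrative assumptions only, not measured figures or real pricing.
EMPLOYEES, PROMPTS, PRICE = 1_000, 40, 3.00
eager = daily_context_tokens(EMPLOYEES, PROMPTS, 20_000)  # inject all memory every time
lazy = daily_context_tokens(EMPLOYEES, PROMPTS, 2_000)    # inject only relevant files

# The summarization example from the text: 15,000-token transcript vs 500-token summary.
summary_savings = 15_000 // 500

print(f"eager: {eager:,} tokens/day, ${daily_cost_usd(eager, PRICE):,.2f}")  # eager: 800,000,000 tokens/day, $2,400.00
print(f"lazy:  {lazy:,} tokens/day, ${daily_cost_usd(lazy, PRICE):,.2f}")    # lazy:  80,000,000 tokens/day, $240.00
print(f"summary is {summary_savings}x smaller")                              # summary is 30x smaller
```

Because cost scales linearly with injected tokens, cutting per-prompt context from 20,000 to 2,000 tokens cuts this line item by the same 10x, whatever the actual price per token turns out to be.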

The bones of the system don't change. It's still files, still hooks, still a search index. You're just wrapping governance around it. Access control instead of filesystem permissions. Structured audit logs instead of git history. Role-based retrieval instead of keyword matching. The architecture scales. The trust layer is what you have to build.

Text Files All the Way Down

The entire system that gives an AI continuity, identity, and deep personal context is a git repo full of markdown files and four shell scripts.

No proprietary platform. No monthly subscription for "AI memory." No complex infrastructure. Just files that get read and written. The same way humans have kept journals for centuries, except the journal reads itself.

There are fancier ways to do this. Vector databases, RAG pipelines, fine-tuning. I'll keep experimenting. But the foundation is working now, and it's nothing more than text files plus a few shell scripts that put them to use.

Sometimes the simplest solution is the right one.