Using AI to Generate 1.4M Leads

How I used AI to build a complete, proprietary contractor lead generation platform as a solo developer: custom scraping, data enrichment, and a CRM dashboard.

I work full-time as a wellness consultant at a tanning spa. I do food prep for a BBQ catering business three to five nights a week. I sell mead at a couple of farmers markets for a local meadery. And somewhere between all of that, I built a lead generation platform that has scraped 1.4 million contractor leads across the United States.

I have a client who sells tools to contractors and needs people to cold call. Not the junk leads you buy from data brokers that are half disconnected numbers and dead businesses. Real, licensed contractors with verified phone numbers, organized by trade.

At first it took me about a month to find 100,000 leads. Recent advances in AI let me find 400,000 leads in a single week.

What It Actually Does

Every state handles contractor licensing completely differently, and many even handle it at the local level. That licensing data (business name, license type, address, sometimes phone and email) lives in databases, CSV files, and other completely random sources, some of which aren't even publicly accessible. Some of these sources I'm not sure anyone knows exist, aside from a person in an office somewhere who fills out spreadsheets for a living. Until my AI agent ransacked the internet.

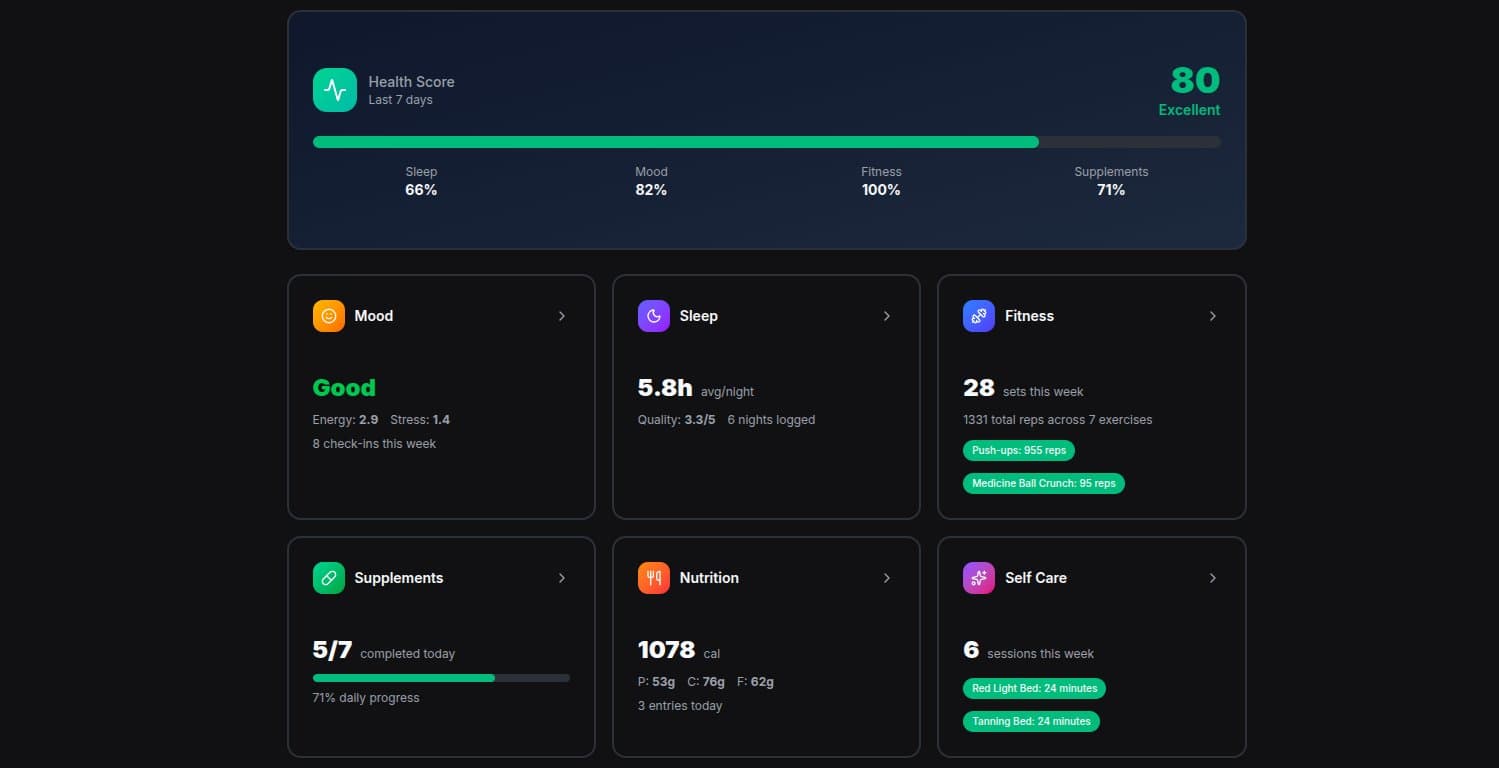

The platform scrapes all the disgusting, messy data it can find, cleans it up, searches the internet for missing phone numbers, and feeds everything into a CRM where my client can filter, search, assign, and export leads for his sales team.

The pipeline looks like this:

- AI agent searches the internet for potential sources like an addict turning their house inside out for the drugs they thought they lost

- AI agent then researches and verifies each source, assesses how hard the data will be to acquire, and tests the web pages and their structure where necessary

- AI agent then writes Python scrapers for each source to pull the data

- Data gets normalized into one standard format (every state structures theirs differently)

- Phone enrichment fills in missing contact info through internet search

- Cleaned data imports into a database

- The CRM frontend lets users filter, search, assign, and export

- Scripts automate invoicing per batch
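To make the tail end of that pipeline concrete, here is a minimal sketch: read scraper output as JSONL, drop records without a phone number, and load the rest into a database. It is an illustration under assumptions, not the platform's actual code. SQLite stands in for the production database (the CRM's backend is Convex), and the `leads` table and its columns are hypothetical. Enrichment and deduplication would slot in as further generator stages between `read_jsonl` and `load`.

```python
import json
import sqlite3
from typing import Iterable, Iterator


def read_jsonl(lines: Iterable[str]) -> Iterator[dict]:
    """Parse scraper output: one JSON object per non-blank line."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def has_phone(records: Iterable[dict]) -> Iterator[dict]:
    """Drop records without a phone number; no phone means no lead."""
    for rec in records:
        if rec.get("phone"):
            yield rec


def load(records: Iterable[dict], conn: sqlite3.Connection) -> int:
    """Insert records into a hypothetical `leads` table; return the table's row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS leads ("
        "license_number TEXT PRIMARY KEY, business_name TEXT, "
        "trade TEXT, state TEXT, phone TEXT)"
    )
    rows = (
        (r["license_number"], r["business_name"], r.get("trade"), r.get("state"), r["phone"])
        for r in records
    )
    # INSERT OR IGNORE skips rows whose license_number is already present.
    conn.executemany("INSERT OR IGNORE INTO leads VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM leads").fetchone()[0]


if __name__ == "__main__":
    scraped = [
        '{"license_number": "A1", "business_name": "Acme Roofing", "state": "TX", "phone": "5125550100"}',
        '{"license_number": "B2", "business_name": "No Phone LLC", "state": "TX", "phone": null}',
    ]
    conn = sqlite3.connect(":memory:")
    print(load(has_phone(read_jsonl(scraped)), conn))  # 1
```

Because each stage is a generator, records stream through one at a time, so a large scrape never has to fit in memory all at once.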

This seems clean on paper. Getting there was not.

The Hard Parts

Every Source Is Its Own Nightmare

This was one thing that made me want to smash my forehead into the desk. There is no standard format for contractor licensing data. None. Texas organizes by county. Florida organizes by license category. New York City alone has 3 separate databases spread across different departments. North Carolina's search requires querying all 855 zip codes with a 2-mile radius.
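A query-per-ZIP sweep like North Carolina's runs long enough that a crash halfway through shouldn't mean starting over. One way to handle that, sketched below as an assumption rather than the author's code, is to append results to a JSONL file and record each finished ZIP code in a checkpoint file, so a rerun skips work already done. The `search` callable is a hypothetical stand-in for whatever actually queries the portal with a ZIP code and radius.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Iterable


def sweep_zip_codes(
    zip_codes: Iterable[str],
    search: Callable[[str, int], list[dict]],  # hypothetical portal query function
    out_path: Path,
    checkpoint: Path,
    radius_miles: int = 2,
) -> int:
    """Query each ZIP code once across runs; return how many ZIPs were queried this run."""
    done = set(checkpoint.read_text().split()) if checkpoint.exists() else set()
    queried = 0
    for zip_code in zip_codes:
        if zip_code in done:
            continue  # finished on an earlier run
        rows = search(zip_code, radius_miles)
        # Write results first, then mark the ZIP done: a crash in between repeats
        # a ZIP (duplicates get removed later) instead of silently losing it.
        with out_path.open("a") as out:
            for row in rows:
                out.write(json.dumps(row) + "\n")
        with checkpoint.open("a") as cp:
            cp.write(zip_code + "\n")
        done.add(zip_code)
        queried += 1
    return queried


if __name__ == "__main__":
    def fake_search(zip_code: str, radius: int) -> list[dict]:
        return [{"zip": zip_code, "radius": radius}]  # stand-in for the real portal query

    workdir = Path(tempfile.mkdtemp())
    out, cp = workdir / "nc.jsonl", workdir / "nc.done"
    print(sweep_zip_codes(["27601", "27602"], fake_search, out, cp))           # 2
    print(sweep_zip_codes(["27601", "27602", "27603"], fake_search, out, cp))  # 1
```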

Some sources use modern APIs. Some have ancient ASP.NET forms with server-side pagination that look like they were built before I was born. Some offer bulk CSV downloads. Some have CAPTCHA protection.

Every single source needed its own scraper with its own logic. But they all had to output the same JSONL format so the import pipeline could handle them. Dozens of scrapers, dozens of edge cases, one format out the other end.
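Here is a minimal sketch of the "one format out the other end" idea: each scraper keeps its own parsing logic but hands raw rows to a shared normalizer, which maps source-specific column names onto one standard schema and serializes the result as a JSONL line. The source keys and column names in `FIELD_MAPS` are invented for illustration, not the real portals' headers.

```python
import json
from typing import Any, Optional

STANDARD_FIELDS = (
    "business_name", "license_type", "license_number",
    "address", "phone", "email", "state",
)

# Hypothetical column names per source; every real portal labels things differently.
FIELD_MAPS: dict[str, dict[str, str]] = {
    "tx_portal": {
        "BUSINESS NAME": "business_name", "LICENSE TYPE": "license_type",
        "LIC #": "license_number", "MAILING ADDRESS": "address", "PHONE": "phone",
    },
    "fl_portal": {
        "DBA": "business_name", "Category": "license_type", "LicenseNo": "license_number",
        "Address1": "address", "Phone": "phone", "Email": "email",
    },
}


def normalize(source: str, state: str, raw: dict[str, Any]) -> dict[str, Optional[str]]:
    """Map one raw scraped row onto the standard schema; unknown columns are dropped."""
    mapping = FIELD_MAPS[source]
    record: dict[str, Optional[str]] = {field: None for field in STANDARD_FIELDS}
    for column, value in raw.items():
        field = mapping.get(column)
        if field is None:
            continue
        text = "" if value is None else str(value).strip()
        record[field] = text or None  # empty strings become null
    record["state"] = state
    return record


def to_jsonl_line(record: dict[str, Optional[str]]) -> str:
    """Serialize a record as one JSONL line with a stable key order."""
    return json.dumps(record, sort_keys=True)
```

Adding a new source then means adding one entry to the mapping table rather than touching the import pipeline.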

Sites That Don't Want You There

Some of these sites actively detect and block scrapers. Fair enough. The solution was VPN rotation, cycling IP addresses to stay under the radar. Rate limiting was critical. Hit a site too fast and you're blocked. Too slow and the scrape takes weeks.
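The rate-limiting half of that can be as simple as enforcing a minimum gap between requests, with a little random jitter so the timing looks less mechanical. This is a sketch, not the author's implementation; the interval and jitter values are assumptions you would tune per site, and VPN/IP rotation is not shown.

```python
import random
import time


class RateLimiter:
    """Enforce a minimum gap between requests, plus optional random jitter."""

    def __init__(self, min_interval: float, jitter: float = 0.0) -> None:
        self.min_interval = min_interval  # seconds between requests (assumed per-site setting)
        self.jitter = jitter              # extra random delay of 0..jitter seconds
        self._last = float("-inf")        # time of the previous request

    def wait(self) -> None:
        """Block until the next request is allowed, then record the request time."""
        delay = self.min_interval + random.uniform(0, self.jitter)
        elapsed = time.monotonic() - self._last
        if elapsed < delay:
            time.sleep(delay - elapsed)
        self._last = time.monotonic()
```

Usage: create something like `RateLimiter(min_interval=2.0, jitter=1.0)` per site and call `wait()` before each page fetch. The class is not thread-safe, so parallel workers would each need their own limiter or a lock around `wait()`.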

For JavaScript-rendered sites (most state licensing portals), I used Selenium to run a real browser. BeautifulSoup handled the simpler static HTML sites. Some sources needed both: Selenium to navigate through search forms, then BeautifulSoup to parse the results.
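The parsing step of that Selenium-then-parse pattern boils down to turning a results table into rows of cell text. The sketch below uses Python's standard-library `html.parser` as a dependency-free stand-in for BeautifulSoup; with Selenium you would feed it `driver.page_source` after submitting the search form. The markup it expects (a plain `<table>` of `<tr>` rows with closed `<td>`/`<th>` cells) is an assumption; real portals vary.

```python
from html.parser import HTMLParser
from typing import Optional


class TableRowParser(HTMLParser):
    """Collect the whitespace-normalized text of each <td>/<th> cell, grouped by <tr>."""

    def __init__(self) -> None:
        super().__init__()
        self.rows: list[list[str]] = []
        self._row: Optional[list[str]] = None   # cells of the row being read
        self._cell: Optional[list[str]] = None  # text chunks of the cell being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)  # includes text inside nested tags like <a> or <b>

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._row is not None and self._cell is not None:
            self._row.append(" ".join("".join(self._cell).split()))
            self._cell = None
        elif tag == "tr" and self._row is not None:
            if self._row:
                self.rows.append(self._row)
            self._row = None


def parse_rows(html: str) -> list[list[str]]:
    """Return the table rows found in an HTML page as lists of cell text."""
    parser = TableRowParser()
    parser.feed(html)
    parser.close()
    return parser.rows
```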

It's a cat-and-mouse game. I got pretty good at it. AI made it a hungry hippo game.

The Phone Number Problem

Here's the thing about licensing data. It sometimes includes business name and address but not phone numbers. And if your client needs to cold call people, no phone number means no lead.

The fix was a search script. For each business, run a query with the name and state, parse the results for a phone number. This runs through parallel workers, multiple processes hammering away simultaneously.
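A minimal sketch of that enrichment loop, assuming US/Canadian (NANP) 10-digit numbers: a regex pulls candidate numbers out of search-result text, and a pool of workers runs the lookups concurrently. The `search` callable is a hypothetical stand-in for whatever search API or scraper returns result text, and the query format is an assumption. Threads are used here because the work is network-bound; the original runs multiple processes, and switching to `ProcessPoolExecutor` would require module-level (picklable) functions in place of the nested `lookup`.

```python
import re
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# NANP numbers such as (512) 555-0100, 512.555.0100 or +1 512 555 0100.
PHONE_RE = re.compile(r"(?<!\d)(?:\+?1[\s.-]?)?\(?(\d{3})\)?[\s.-]?(\d{3})[\s.-]?(\d{4})(?!\d)")


def extract_phones(text: str) -> list[str]:
    """Return distinct 10-digit numbers in the order they first appear."""
    found: list[str] = []
    for match in PHONE_RE.finditer(text):
        number = "".join(match.groups())
        if number not in found:
            found.append(number)
    return found


def enrich(
    businesses: list[dict],
    search: Callable[[str], str],  # hypothetical: query string -> result text
    max_workers: int = 8,
) -> list[dict]:
    """Look up every business concurrently and attach any phone candidates found."""

    def lookup(biz: dict) -> dict:
        query = f'"{biz["business_name"]}" {biz["state"]} phone'
        return {**biz, "phone_candidates": extract_phones(search(query))}

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in input order, regardless of completion order.
        return list(pool.map(lookup, businesses))
```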

Hit rates varied wildly. Some sources had nearly complete phone matches. Others had stale or just whack data, and their hit rates dropped below 50%. We couldn't use a lead unless we were certain we had found the correct phone number. Some enrichment jobs are massive. Like, run-for-days-in-the-background massive.
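One conservative way to encode "only if we're certain", offered as an assumption rather than the rule the author actually used: accept a number only when at least two separate search results agree on it and no other number is equally supported. Anything ambiguous is dropped rather than guessed.

```python
from collections import Counter
from typing import Optional


def pick_phone(numbers_per_result: list[list[str]], min_agreement: int = 2) -> Optional[str]:
    """Return a phone number only when the search results clearly agree on one.

    Each inner list holds the normalized numbers found in one search result that
    mentioned the business. Returns None whenever the evidence is thin or ambiguous.
    """
    # Count each number at most once per result, so one noisy page can't outvote others.
    counts = Counter(num for numbers in numbers_per_result for num in set(numbers))
    if not counts:
        return None
    ranked = counts.most_common(2)
    best, best_count = ranked[0]
    if best_count < min_agreement:
        return None  # too few independent results back this number
    if len(ranked) > 1 and ranked[1][1] == best_count:
        return None  # two numbers equally supported: ambiguous, drop the lead
    return best
```

Raising `min_agreement` trades hit rate for certainty, which matches the priority here: a missing lead costs less than a wrong number.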

Deduplication

The same contractor can show up in multiple databases. A general contractor might operate in 3 counties. Three records, same business. The dedup pipeline exports all current leads from the production database, then checks each new import against existing records. Business name, address, and phone number all get used as matching signals. It's not glamorous work but it's the difference between selling clean data and selling garbage.
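A sketch of that matching under assumed rules: two records count as the same business if they share a 10-digit phone number, or share a normalized business name plus normalized address. The suffix list and normalization steps are illustrative choices, and `existing` stands in for the export from the production database.

```python
import re
from typing import Iterable

# Illustrative list of legal suffixes ignored when comparing names.
NAME_SUFFIXES = {"inc", "llc", "co", "corp", "company", "ltd"}


def _words(text: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, and split into words."""
    return re.sub(r"[^a-z0-9 ]", " ", text.lower()).split()


def match_keys(rec: dict) -> set[tuple[str, str]]:
    """Build the signals used to recognize the same business across sources."""
    keys: set[tuple[str, str]] = set()
    digits = re.sub(r"\D", "", rec.get("phone") or "")[-10:]
    if len(digits) == 10:
        keys.add(("phone", digits))
    name, address = rec.get("business_name"), rec.get("address")
    if name and address:
        name_key = " ".join(w for w in _words(name) if w not in NAME_SUFFIXES)
        keys.add(("name+address", name_key + "|" + " ".join(_words(address))))
    return keys


def dedupe(existing: Iterable[dict], incoming: Iterable[dict]) -> list[dict]:
    """Return incoming records that match nothing already seen (including earlier incoming ones)."""
    seen: set[tuple[str, str]] = set()
    for rec in existing:
        seen |= match_keys(rec)
    fresh = []
    for rec in incoming:
        keys = match_keys(rec)
        if keys & seen:
            continue  # shares a phone or a name+address with a known record
        seen |= keys
        fresh.append(rec)
    return fresh
```

The phone key is what catches the three-county general contractor: different addresses, same number.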

How AI Made This Possible

Building dozens of scrapers for different state websites, each with unique data formats, anti-scraping measures, and edge cases? That's a team. That's months.

I don't have a team. I have an AI Agent open in a terminal between shifts.

I'd describe what I needed. "Scrape Florida's licensing portal. It uses JavaScript rendering. I need business name, license type, license number, address, phone, and email. Data is organized by county and license category."

AI agent would write the scraper, handle the pagination, build the data normalization, and create the import script. I'd test it, hit some wall I didn't expect, fix the edge cases, and move to the next state.

Now the AI agent does all of the research to find new sources itself. It also figures out how to acquire the data, builds and tests the scrapers when needed, and debugs and updates the code whenever a script hits errors.

The CRM itself is a full Next.js application with Convex for the real-time backend, Clerk for auth, Stripe for invoicing, and role-based access control for admin, manager, and sales roles. That's a lot of moving parts for one person. AI builds all of this as well, using skills it built for itself after researching the most up-to-date versions and best practices.

The Business

My client pays per lead batch. He requests leads for specific trade categories, I scrape and enrich the data so he can export it to CSV, and then I invoice him through Stripe.

The potential here is bigger than one client. The same data and infrastructure could power a SaaS product. API access for CRM integrations. Automated monthly refreshes. Lead scoring. Right now it's a service, but the bones for a product are already there, with an AI agent as the product manager.

This is what a single person can build now. Not a prototype. Not a demo. A production system doing heavy data processing at scale for a real client.

1,400,000 leads and counting. One developer.